Let’s be real for a second: we are all a little bit addicted to the “green checkmark.”

If you work in digital marketing, your morning probably starts the same way mine does. You log into a dashboard, see a “Site Audit” score of 85%, and immediately start hunting for those missing meta descriptions or broken images to get that score up to 100. It feels like progress. It looks like “Technical SEO.”

But here is the uncomfortable truth: Most SEO tools are lying to you by omission.

We have entered an era of “Tool-Led SEO” where we spend more time satisfying a software’s proprietary algorithm than Google’s actual ranking systems. This is the biggest blind spot in the industry today. We are so focused on the simulation that we’ve lost sight of the ground truth.

To win in 2026, you have to move beyond the dashboard. You need a data-driven strategy that balances what the bots see, what the users do, and what the server actually says.

The “Tool-First” Trap: Why Your 100/100 Score is Often Meaningless

In the world of search engine optimization, we love metrics. We love “Domain Authority,” “Keyword Difficulty,” and “Optimization Scores.” But these are all third-party inventions. Google doesn’t have a “DA” score. Google doesn’t care if your third-party tool thinks your keyword density is too high.

The “Tool-First” trap happens when you treat a software’s recommendations as a definitive roadmap. These tools use their own crawlers (not Google’s) to simulate a visit. They are essentially a “sketch” of your website, not a photograph.

The Real-World Mismatch

I once consulted for a massive e-commerce brand that had a “Technical Health Score” of 98%. On paper, they were perfect. Yet, their organic traffic was cratering.

When we stopped looking at the tool and started looking at the server logs, we found the problem: the site’s JavaScript was so heavy that Googlebot was “timing out” after five seconds. The SEO tool—which didn’t have a rendering limit—was happily crawling everything. The tool saw a masterpiece; Googlebot saw a brick wall.

Why SEO Tools Have Built-In Limitations

To build a high-level digital marketing strategy, you have to understand the “Why” behind the data. Tools are limited by three main factors:

- Simulation vs. Reality: AhrefsBot or SemrushBot behaves differently than Googlebot. They don’t have the same “Crawl Demand” logic.

- The “Difficulty” Illusion: Keyword difficulty (KD) is usually just a calculation of how many backlinks the top 10 results have. It ignores search intent, brand authority, and topical relevance.

- Data Latency: Most tools update their databases every few days or weeks. In a fast-moving SERP, that data is already stale by the time you see it.

Tool Metrics vs. Real-World Equivalents

| Metric Name | What the Tool Thinks | The Real-World Truth |
| --- | --- | --- |
| Keyword Difficulty (KD) | Based on backlink counts of competitors. | Based on intent match, E-E-A-T, and user satisfaction. |
| Health Score | Percentage of technical “best practices” met. | Whether Googlebot can efficiently crawl and index your money pages. |
| Search Volume | An average estimate of monthly queries. | A fluctuating number impacted by seasonality, news, and AI Overviews. |
| Domain Authority | A proprietary strength score of your link profile. | A non-existent metric at Google; they use PageRank and Topical Authority. |

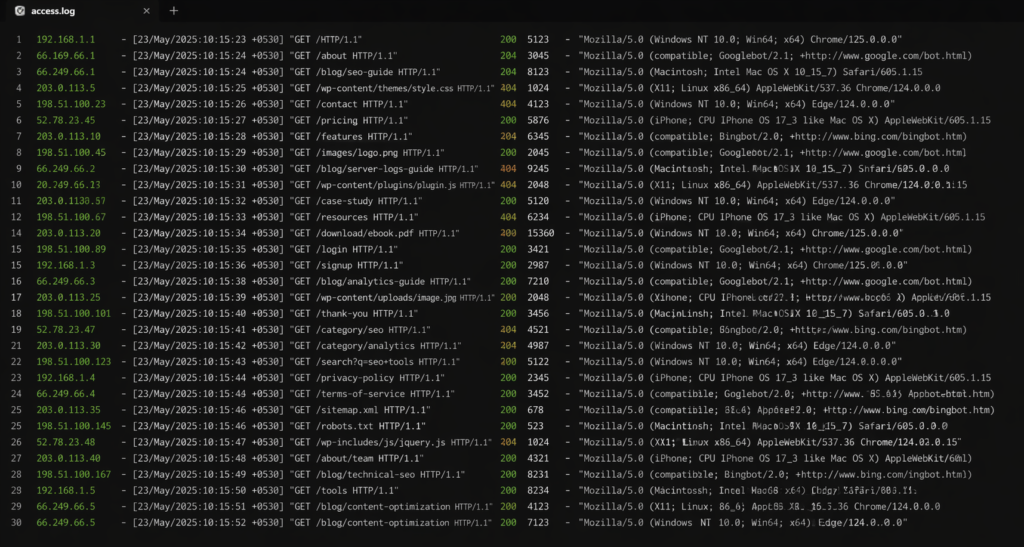

Server Logs: The Only “Ground Truth” Left

If you want to stop guessing, you have to start looking at server logs.

In simple terms, a server log is a diary of every single person and bot that “knocks on the door” of your website. When you analyze these, you aren’t looking at a simulation. You are looking at the actual interaction between your site and Google.

What Logs Reveal That Tools Miss:

- Crawl Budget Wastage: You might find that Google is spending 60% of its time on your “Privacy Policy” and “Terms of Service” pages instead of your “Best Selling Products.”

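- Orphaned Pages: These are pages with no internal links pointing to them. A link-following tool crawler never finds them, but your logs show Googlebot still requesting them, spending crawl budget on pages that receive no internal link equity.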

- Mobile vs. Desktop Priority: You can see exactly how many of your crawl requests come from Googlebot Smartphone versus Googlebot Desktop, which confirms whether your site is actually being crawled mobile-first.
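To make those three checks concrete, here is a minimal Python sketch. It assumes your server writes the common Apache/Nginx “combined” log format and that you have a set of internally linked paths from your own site crawl; the `linked_paths` input and all function names are hypothetical. It identifies Googlebot by user agent only, and since user agents can be spoofed, you should verify the IPs with a reverse DNS lookup before trusting the totals.

```python
import re
from collections import Counter

# Assumes the Apache/Nginx "combined" log format; adjust the pattern if yours differs.
LOG_RE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] '
    r'"\S+ (?P<path>\S+)[^"]*" \d{3} \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_requests(lines):
    """Yield (path without query string, user agent) for requests claiming to be Googlebot.

    User agents can be spoofed; verify IPs with reverse DNS before trusting the totals.
    """
    for line in lines:
        m = LOG_RE.match(line)
        if m and "Googlebot" in m.group("ua"):
            yield m.group("path").split("?")[0], m.group("ua")


def crawl_report(lines, linked_paths):
    """Summarize where Googlebot spends its requests.

    linked_paths: paths your own site crawl reached via internal links (hypothetical input).
    """
    hits = list(googlebot_requests(lines))
    total = len(hits) or 1  # avoid dividing by zero on an empty log
    # Share of requests per top-level section, e.g. "/products" or "/privacy-policy".
    sections = Counter("/" + path.strip("/").split("/")[0] for path, _ in hits)
    # Googlebot Smartphone's user agent contains "Mobile"; Googlebot Desktop's does not.
    devices = Counter("smartphone" if "Mobile" in ua else "desktop" for _, ua in hits)
    # Crawled paths that no internal link points to are orphan candidates.
    orphans = sorted({path for path, _ in hits} - set(linked_paths))
    return {
        "section_share": {s: n / total for s, n in sections.most_common()},
        "devices": dict(devices),
        "orphans": orphans,
    }
```

The report gives you each section’s share of Googlebot requests (your crawl-waste check), the smartphone/desktop split, and a list of crawled-but-unlinked paths to review as orphans.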

Technical SEO without log file analysis is just an educated guess. If you’re not looking at the logs, you don’t actually know if Google is seeing your changes.

Understanding Real User Behavior (The “Why” Behind the Click)

Let’s say you’ve fixed the crawl issues. You’re ranking. The traffic is coming in. But your conversion rate is zero.

This is where the next blind spot lives. Many SEOs think their job ends at the “Click.” In reality, a successful digital marketing funnel requires user behavior analysis: understanding what people actually do after they land.

Google’s algorithms are increasingly focused on “helpful content.” If a user clicks your result, finds it confusing, and bounces back to the search results (pogo-sticking), Google learns that your page isn’t the best answer.

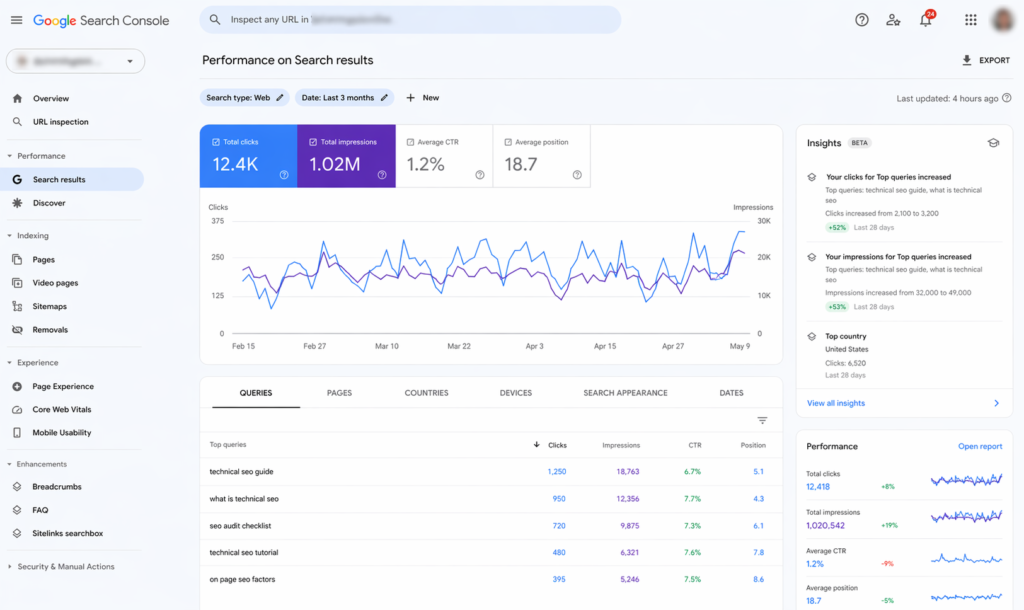

Bridging the Gap with Google Tools

To fix this, you need to master the two pillars of first-party data:

- Google Search Console (GSC): This tells you how you look in the SERP. It shows impressions, clicks, and (most importantly) which queries are actually driving your visibility.

- Google Analytics 4 (GA4): This tells you what happens after the click. Are they engaging? Are they scrolling? Are they converting?

The Data Triangulation Framework: A 5-Layer System

If you want to move toward real data-driven insights, you need to stop looking at data in silos. Use this framework to make every SEO decision:

1. The Infrastructure Layer (Server Logs)

Question: Is Googlebot actually reaching my site?

Action: Use tools like Screaming Frog Log File Analyser to see crawl frequency and status codes.
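Dedicated tools like Screaming Frog Log File Analyser handle this at scale, but the underlying calculation is simple. A minimal Python sketch, assuming the Apache/Nginx “combined” log format and user-agent matching only (function and variable names are hypothetical): it returns each status code’s share of Googlebot requests and the number of distinct days each URL was crawled.

```python
import re
from collections import Counter, defaultdict
from datetime import datetime

# Assumes the Apache/Nginx "combined" log format; adjust the pattern if yours differs.
LOG_RE = re.compile(
    r'\S+ \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"\S+ (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_status_and_frequency(lines):
    """Return (share of Googlebot requests per status code, distinct crawl days per URL)."""
    status_counts = Counter()
    days_by_url = defaultdict(set)
    for line in lines:
        m = LOG_RE.match(line)
        # Matches on user agent only; spoofed "Googlebot" traffic should be filtered by IP.
        if not m or "Googlebot" not in m.group("ua"):
            continue
        status_counts[m.group("status")] += 1
        # Combined-log timestamps look like 10/Mar/2025:10:00:00 +0000.
        day = datetime.strptime(m.group("time"), "%d/%b/%Y:%H:%M:%S %z").date()
        days_by_url[m.group("path")].add(day)
    total = sum(status_counts.values()) or 1  # avoid dividing by zero on an empty log
    status_share = {code: n / total for code, n in status_counts.most_common()}
    crawl_days = {url: len(days) for url, days in days_by_url.items()}
    return status_share, crawl_days
```

A money page that shows up on only one or two days a month, or a rising share of 404 and 5xx codes, is the kind of signal a tool’s own crawl cannot show you.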

2. The Visibility Layer (Google Search Console)

Question: How does Google “rank” my relevance?

Action: Look for high-impression/low-CTR keywords. These are your biggest “quick win” opportunities.
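A minimal sketch of the “quick win” filter, assuming you have exported query rows (clicks, impressions, average position) from the GSC Performance report. The `QueryRow` type and the default thresholds are illustrative assumptions, not Google guidance. The position cap keeps the list to queries already on page one, where a better title or meta description has the most room to move CTR.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int
    position: float  # average position as reported by GSC


def quick_wins(rows, min_impressions=1000, max_ctr=0.02, max_position=10.0):
    """Return (query, impressions, ctr, position) for high-impression, low-CTR queries.

    Thresholds are illustrative assumptions; tune them to your site's baseline CTR.
    """
    picks = []
    for r in rows:
        if r.impressions < min_impressions:
            continue  # too little data to judge CTR
        ctr = r.clicks / r.impressions
        if ctr <= max_ctr and r.position <= max_position:
            picks.append((r.query, r.impressions, round(ctr, 4), r.position))
    # Biggest audiences first: these are the cheapest clicks to win.
    return sorted(picks, key=lambda p: p[1], reverse=True)
```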

3. The Behavior Layer (Google Analytics / Heatmaps)

Question: Are humans happy with the content?

Action: Check your “Engagement Rate” in GA4. If it’s below 40%, treat that as a strong signal that your content isn’t meeting the search intent.
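GA4 reports Engagement Rate for you, but it helps to know what it counts. Under GA4’s default settings, a session is engaged if it lasts roughly 10 seconds or more (the timer is configurable), includes a key event, or has at least two page or screen views; engagement rate is engaged sessions divided by total sessions. The sketch below applies that definition to raw session records (for example, from a BigQuery export) and flags landing pages under the 40% rule of thumb. The `Session` shape and function names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    landing_page: str
    duration_s: float
    page_views: int
    key_events: int


def is_engaged(s, min_seconds=10):
    # Approximates GA4's default engaged-session rule: long enough, a key event,
    # or two or more page views.
    return s.duration_s >= min_seconds or s.key_events > 0 or s.page_views >= 2


def low_engagement_pages(sessions, threshold=0.40):
    """Return {landing_page: engagement_rate} for pages below the threshold."""
    totals = defaultdict(lambda: [0, 0])  # page -> [engaged sessions, all sessions]
    for s in sessions:
        counts = totals[s.landing_page]
        counts[0] += int(is_engaged(s))
        counts[1] += 1
    rates = {page: engaged / n for page, (engaged, n) in totals.items()}
    return {page: rate for page, rate in rates.items() if rate < threshold}
```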

4. The Competitive Layer (SERP Analysis)

Question: What is the “Standard of Excellence” for this query?

Action: Don’t just look at keywords. Look at the type of content ranking. Is it video? Is it a listicle? Is it a calculator?

5. The Experimental Layer (A/B Testing)

Question: Does this change actually work?

Action: Change the title tags on 10 pages and leave 10 comparable pages untouched as a control group. After two weeks, compare how clicks and CTR changed in GSC for each group.
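Comparing the test group against the control group, rather than against its own past, filters out seasonality and sitewide swings. A minimal difference-in-differences sketch: each group is a list of (clicks before, clicks after) pairs per page, pulled from GSC for equal-length windows. The function names are hypothetical.

```python
def group_change(pages):
    """Relative change in total clicks for a group of (before, after) page pairs."""
    before = sum(b for b, _ in pages)
    after = sum(a for _, a in pages)
    if before == 0:
        raise ValueError("group had no clicks in the 'before' window")
    return (after - before) / before


def title_test_lift(test_pages, control_pages):
    """Difference-in-differences: how much more the test group changed than the control."""
    return group_change(test_pages) - group_change(control_pages)
```

A lift of 0.25 means the test pages grew clicks by 25 percentage points more than the control pages. With only 10 pages per group, treat small lifts as noise unless they repeat across tests.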

Real-World Scenarios: Diagnosing Like an Expert

Let’s look at how a “Tool-Led” SEO and a “Data-Led” SEO handle the same problems.

Scenario 1: Traffic is Up, but Revenue is Down

- The Tool-Led SEO: “We need more keywords! Let’s find more high-volume terms and write more blogs.”

- The Data-Led SEO: Looks at GA4 revenue by landing page alongside the query data in GSC. Notices that the new traffic is coming from informational queries that have no commercial intent.

- The Fix: Re-align the content strategy to target “middle-of-the-funnel” keywords that solve a specific problem for the buyer.
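One way to spot that shift is to bucket GSC queries by intent before comparing them with GA4 revenue. The sketch below is a deliberately crude keyword-modifier heuristic: the modifier lists and bucket names are assumptions to adapt to your niche, not an official taxonomy. Patterns are checked in order, so a query matching both a transactional and an informational modifier counts as transactional.

```python
import re

# Illustrative modifier lists (assumptions, not an official taxonomy), checked in order.
INTENT_PATTERNS = [
    ("transactional", re.compile(r"\b(buy|price|pricing|discount|coupon|order|cheap)\b")),
    ("commercial", re.compile(r"\b(best|top|vs|versus|review|reviews|alternatives?|compare)\b")),
    ("informational", re.compile(r"\b(how|what|why|when|who|guide|tutorial|ideas|meaning)\b")),
]


def classify_intent(query):
    """Return the first intent bucket whose modifiers appear in the query."""
    q = query.lower()
    for label, pattern in INTENT_PATTERNS:
        if pattern.search(q):
            return label
    return "unclassified"


def clicks_by_intent(rows):
    """Sum clicks per intent bucket from (query, clicks) pairs, e.g. a GSC export."""
    totals = {}
    for query, clicks in rows:
        label = classify_intent(query)
        totals[label] = totals.get(label, 0) + clicks
    return totals
```

If most new clicks land in the informational bucket while revenue falls, that is the mismatch described in the scenario.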

Scenario 2: High Rankings, but No Clicks

- The Tool-Led SEO: “The tool says I’m #2. I must be winning.”

- The Data-Led SEO: Looks at the actual SERP. Realizes that between the 4 Sponsored Ads, the AI Overview, and the “People Also Ask” box, the #2 organic result is actually “below the fold.”

- The Fix: Target long-tail queries or optimize for the Featured Snippet to jump above the noise.

Scenario 3: “Crawled – Currently Not Indexed”

- The Tool-Led SEO: “I’ll resubmit the sitemap and pray.”

- The Data-Led SEO: Checks the server logs to see if Googlebot is hitting the page. If it is, they analyze the content against competitors.

- The Fix: They realize the content is “thin” or too similar to another page on the site. They merge the pages to create a single, high-authority resource.
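Before merging, it helps to confirm that two pages really overlap. A minimal sketch using word shingles and Jaccard similarity, a standard near-duplicate measure (not necessarily what Google uses internally). The 5-word shingle size and 0.5 threshold are illustrative assumptions, and `pages` is a hypothetical mapping of URL to extracted body text.

```python
import re
from itertools import combinations


def shingles(text, k=5):
    """Set of overlapping k-word sequences ("shingles") from the page text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if not words:
        return set()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a, b):
    """Shared shingles divided by all distinct shingles (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages, threshold=0.5, k=5):
    """Return (url_a, url_b, similarity) pairs at or above the threshold, most similar first."""
    sets = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for (u1, s1), (u2, s2) in combinations(sets.items(), 2):
        sim = jaccard(s1, s2)
        if sim >= threshold:
            pairs.append((u1, u2, round(sim, 3)))
    return sorted(pairs, key=lambda p: p[2], reverse=True)
```

Pairwise comparison is fine for a few hundred pages; larger sites usually switch to MinHash to avoid comparing every pair.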

Common SEO Mistakes (The “What Not to Do” List)

If you want to be seen as an expert in the digital marketing space, stop doing these four things:

- Blindly Trusting Tool Scores: A “100% Health Score” doesn’t mean your site is fast, helpful, or authoritative. It just means you pass the tool’s checklist: no broken links, no missing tags.

- Ignoring the “Middle Man”: The “Middle Man” is the server. If your server is slow or misconfigured, no amount of keyword optimization will save you.

- Optimizing Without Demand: Don’t write a 2,000-word guide on a topic just because a tool says it’s “Easy.” Use Google Trends to see if people actually care about that topic today.

- The “Set it and Forget it” Mentality: SEO is a living ecosystem. Rankings change, intent shifts, and competitors update. If you aren’t testing and tweaking based on user behavior analysis, you are falling behind.

The Future of SEO: From Tools to Systems

The industry is shifting. With the rise of AI-driven search, the old way of “buying a tool and following the checklist” is dying. The future belongs to the “Technical Strategist”—someone who can look at a server log, a GSC report, and a user heatmap and tell a cohesive story.

We are moving from Search Engine Optimization to Search Experience Optimization.

How to Stay Ahead of the Curve:

- Invest in Data Literacy: Learn to use Search Console’s bulk data export to BigQuery to pull your GSC data. It gets you past the 1,000-row limit in the GSC interface.

- Think Like a User: Spend time on the SERP. Type in your keywords. See what Google is trying to tell you through its features.

- Focus on Information Gain: Don’t just regurgitate what the top 10 results say. Add something new—a study, a unique perspective, or a better tool.
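Search Console’s bulk data export writes daily tables into a BigQuery dataset. The Python sketch below builds a top-queries report, assuming the default `searchconsole` dataset name and the documented `searchdata_site_impression` table; verify the column names against your own export before relying on it. Average position is computed as `SUM(sum_top_position) / SUM(impressions) + 1`, because the export stores zero-based positions. Running the query needs the `google-cloud-bigquery` package and credentials; building the SQL does not. Function names are hypothetical.

```python
import re

_IDENT = re.compile(r"[A-Za-z0-9_-]+")  # conservative check for project/dataset names


def top_queries_sql(project, dataset="searchconsole", days=28, limit=5000):
    """Build a top-queries report against the bulk-export site table.

    Table and column names follow Google's documented export schema as we understand it.
    """
    if not (_IDENT.fullmatch(project) and _IDENT.fullmatch(dataset)):
        raise ValueError("unexpected characters in project or dataset name")
    if not (isinstance(days, int) and days > 0 and isinstance(limit, int) and limit > 0):
        raise ValueError("days and limit must be positive integers")
    # sum_top_position is zero-based, so add 1 to get the familiar average position.
    return f"""SELECT
  query,
  SUM(clicks) AS clicks,
  SUM(impressions) AS impressions,
  SAFE_DIVIDE(SUM(clicks), SUM(impressions)) AS ctr,
  SAFE_DIVIDE(SUM(sum_top_position), SUM(impressions)) + 1 AS avg_position
FROM `{project}.{dataset}.searchdata_site_impression`
WHERE search_type = 'WEB'
  AND NOT is_anonymized_query
  AND data_date >= DATE_SUB(CURRENT_DATE(), INTERVAL {days} DAY)
GROUP BY query
ORDER BY impressions DESC
LIMIT {limit}"""


def fetch_top_queries(project, **kwargs):
    """Run the report with the google-cloud-bigquery client (needs the package and credentials)."""
    from google.cloud import bigquery  # imported here so building the SQL needs no dependency

    client = bigquery.Client(project=project)
    rows = client.query(top_queries_sql(project, **kwargs)).result()
    return [dict(row.items()) for row in rows]
```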

The Master Comparison Table: Navigating the SEO Data Landscape

When you’re building your next SEO audit, use this table to decide which data source to trust for specific tasks.

| SEO Task | Best Data Source | Why? |
| --- | --- | --- |
| Indexing Issues | Server Logs + GSC | Shows whether Google is visiting and whether it decided to keep the page. |
| Keyword Research | GSC + Google Trends | Shows what people actually type, not what a tool guesses. |
| Technical Site Health | Server Logs + Screaming Frog | Shows real-time server responses and bot behavior. |
| Content Performance | GA4 (Engagement Rate) | Measures whether the content actually solved the user’s problem. |
| Competitor Analysis | SERP Analysis (Manual) | Tools can’t see the “vibe” or “intent” of a competitor’s page. |
| Backlink Profile | Ahrefs / Semrush | This is the one area where third-party tools still reign supreme. |

Conclusion: Logs Show Truth, Tools Show Patterns

Let’s wrap this up with a reality check. There is no “magic button” in SEO. If there were, everyone would be #1.

The biggest blind spot isn’t a lack of tools—it’s a lack of critical thinking. We have become so reliant on software to tell us what to do that we’ve stopped asking why we’re doing it.

The most successful digital marketing campaigns are built on a foundation of “Ground Truth.”

- Logs show you what actually happened.

- Tools show you general patterns and ideas.

- Testing shows you what actually works for your audience.

Stop trying to get a 100/100 score in a tool. Start trying to get a 100/100 in user satisfaction and crawl efficiency. When you align those two, the rankings will follow naturally.

What’s Your Next Move?

Don’t just close this tab and go back to your dashboard. Today, I want you to do one thing: Go into Google Search Console, navigate to “Settings” > “Crawl stats” > “Open report,” and look at the “By response” breakdown of crawl requests. If “OK (200)” isn’t the overwhelming majority, you’ve found a real-world problem that no “Health Score” could ever fully explain. That is where your real SEO work begins.